library(dplyr)

library(tidyverse)

library(tibble)

library(ggplot2)

library(knitr)

library(lmtest)

library(caret)

library(glmtoolbox)

library(predtools)

library(pROC)

library(car)

library(devtools)

#using data specified in this github repository:

install_github("jhs-hwg/cardioStatsUSA")

library(cardioStatsUSA)4 Model Diagnostics

#to prevent errors, exclude the rows with na:

used_vars = c('cc_diabetes', 'bp_sys_mean', 'demo_age_years', 'demo_race', 'demo_gender', 'cc_bmi', 'cc_smoke', 'bp_med_use')

clean_nhanes <- nhanes_data[complete.cases(nhanes_data[,..used_vars]), ]

null_model <- glm(cc_diabetes ~ 1, data = clean_nhanes, family=binomial)

simple_diab_model_sys <- glm(cc_diabetes ~ bp_sys_mean, data = clean_nhanes, family = binomial)

full_diab_model_sys <- glm(cc_diabetes ~ bp_sys_mean + demo_age_years + demo_race + demo_gender+ cc_bmi + cc_smoke + bp_med_use, data = clean_nhanes, family = binomial)The next step will be to evaluate the model to determine ______.

4.1 Likelihood Ratio Test

The likelihood ratio test involves comparing whether two models are significantly different. Here, we will compare our full model with the null model (no covariates):

The null hypothesis is that there is no significant difference between the null model \(H_0\) and the full model \(H_1\).

The test compares the predicted likelihood of an observed outcome under the null model vs the predicted likelihood of the outcome under the model. In this case, the outcome will be diabetes. The test statistic will be calculated \(\lambda = -2 log(\frac{L(H_0)}{L(H_1)})\), where L denotes the likelihood of diabetes evaluated under each model. If the likelihood is much higher in the full model than in the null model, it means that additional parameters improve the model fit. As a result, \(\lambda\) and its corresponding p value will be a much smaller, providing stronger evidence that we may need to reject the null hypothesis.

lrtest(full_diab_model_sys, null_model)| #Df | LogLik | Df | Chisq | Pr(>Chisq) |

|---|---|---|---|---|

| 14 | -16527.27 | NA | NA | NA |

| 1 | -20638.95 | -13 | 8223.36 | 0 |

As expected, we can see that the full model is significant compared to the null hypothesis. We can also compare it to the simple model which uses only systolic blood pressure as a variable.

lrtest(full_diab_model_sys, simple_diab_model_sys)| #Df | LogLik | Df | Chisq | Pr(>Chisq) |

|---|---|---|---|---|

| 14 | -16527.27 | NA | NA | NA |

| 2 | -19943.85 | -12 | 6833.152 | 0 |

4.2 Model Fit

Let’s evaluate the accuracy of our model: We first calculate the predicted probabilities using a cutoff probability of 0.5 with our model. Then, we compare these predictions to the references and find our accuracy.

pred_probs <- predict(full_diab_model_sys, type="response")

pred_ys <- ifelse(pred_probs >0.5, 1, 0)

clean_nhanes$cc_diabetes_num <- ifelse(clean_nhanes$cc_diabetes == "Yes", 1, 0)

table(clean_nhanes$cc_diabetes_num, pred_ys) pred_ys

0 1

0 44302 859

1 6167 869confusionMatrix(as.factor(pred_ys),as.factor(clean_nhanes$cc_diabetes_num), positive = '1')Confusion Matrix and Statistics

Reference

Prediction 0 1

0 44302 6167

1 859 869

Accuracy : 0.8654

95% CI : (0.8624, 0.8683)

No Information Rate : 0.8652

P-Value [Acc > NIR] : 0.4522

Kappa : 0.1533

Mcnemar's Test P-Value : <2e-16

Sensitivity : 0.12351

Specificity : 0.98098

Pos Pred Value : 0.50289

Neg Pred Value : 0.87781

Prevalence : 0.13480

Detection Rate : 0.01665

Detection Prevalence : 0.03311

Balanced Accuracy : 0.55224

'Positive' Class : 1

pred_probs_simple <- predict(simple_diab_model_sys, type="response")

pred_ys <- ifelse(pred_probs >0.5, 1, 0)

clean_nhanes$cc_diabetes_num <- ifelse(clean_nhanes$cc_diabetes == "Yes", 1, 0)

table(clean_nhanes$cc_diabetes_num, pred_ys) pred_ys

0 1

0 44302 859

1 6167 869confusionMatrix(as.factor(pred_ys),as.factor(clean_nhanes$cc_diabetes_num), positive = '1')Confusion Matrix and Statistics

Reference

Prediction 0 1

0 44302 6167

1 859 869

Accuracy : 0.8654

95% CI : (0.8624, 0.8683)

No Information Rate : 0.8652

P-Value [Acc > NIR] : 0.4522

Kappa : 0.1533

Mcnemar's Test P-Value : <2e-16

Sensitivity : 0.12351

Specificity : 0.98098

Pos Pred Value : 0.50289

Neg Pred Value : 0.87781

Prevalence : 0.13480

Detection Rate : 0.01665

Detection Prevalence : 0.03311

Balanced Accuracy : 0.55224

'Positive' Class : 1

Our model has a final accuracy of about 86%, and mostly misclassifies those with diabetes as without (false negatives).

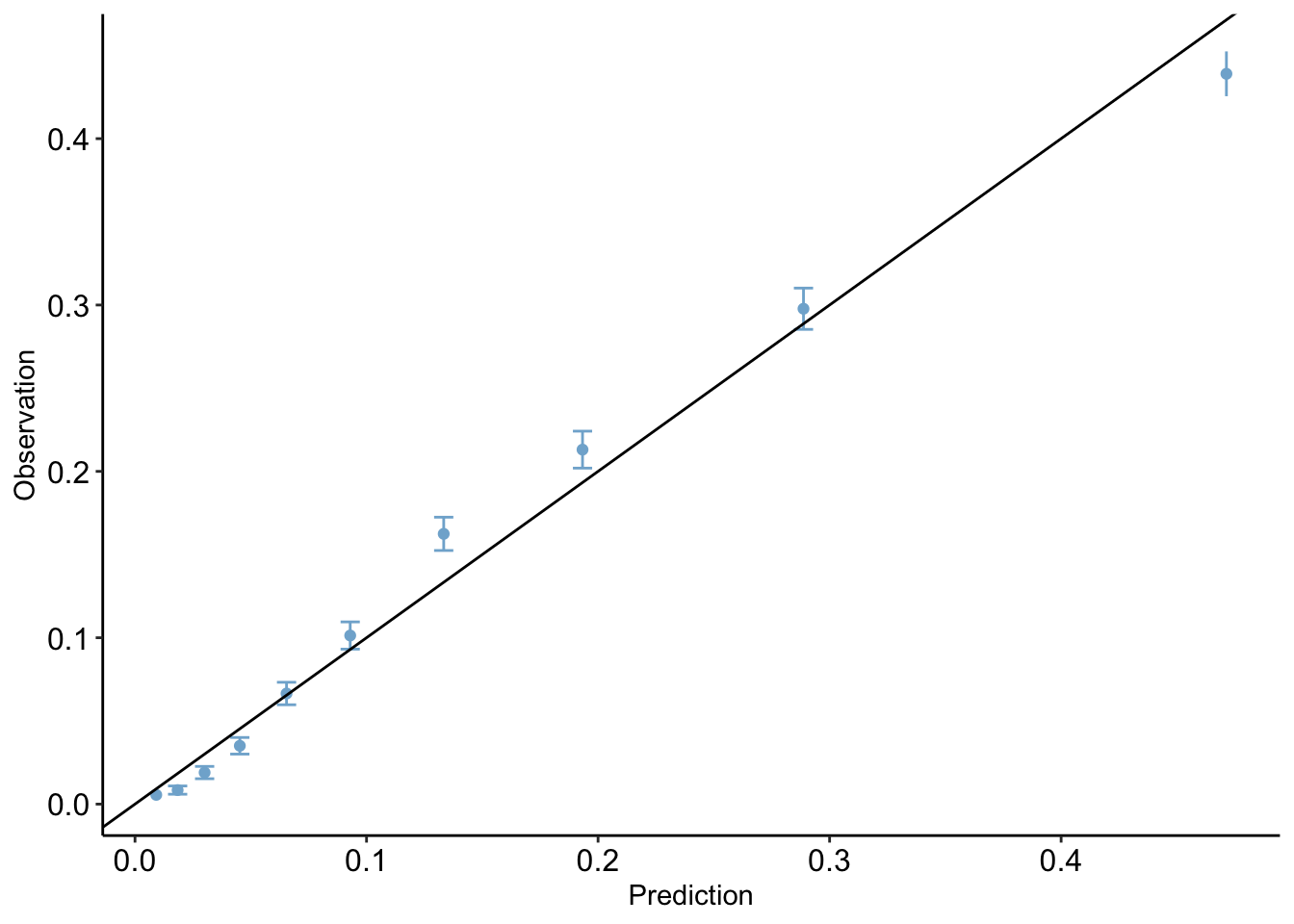

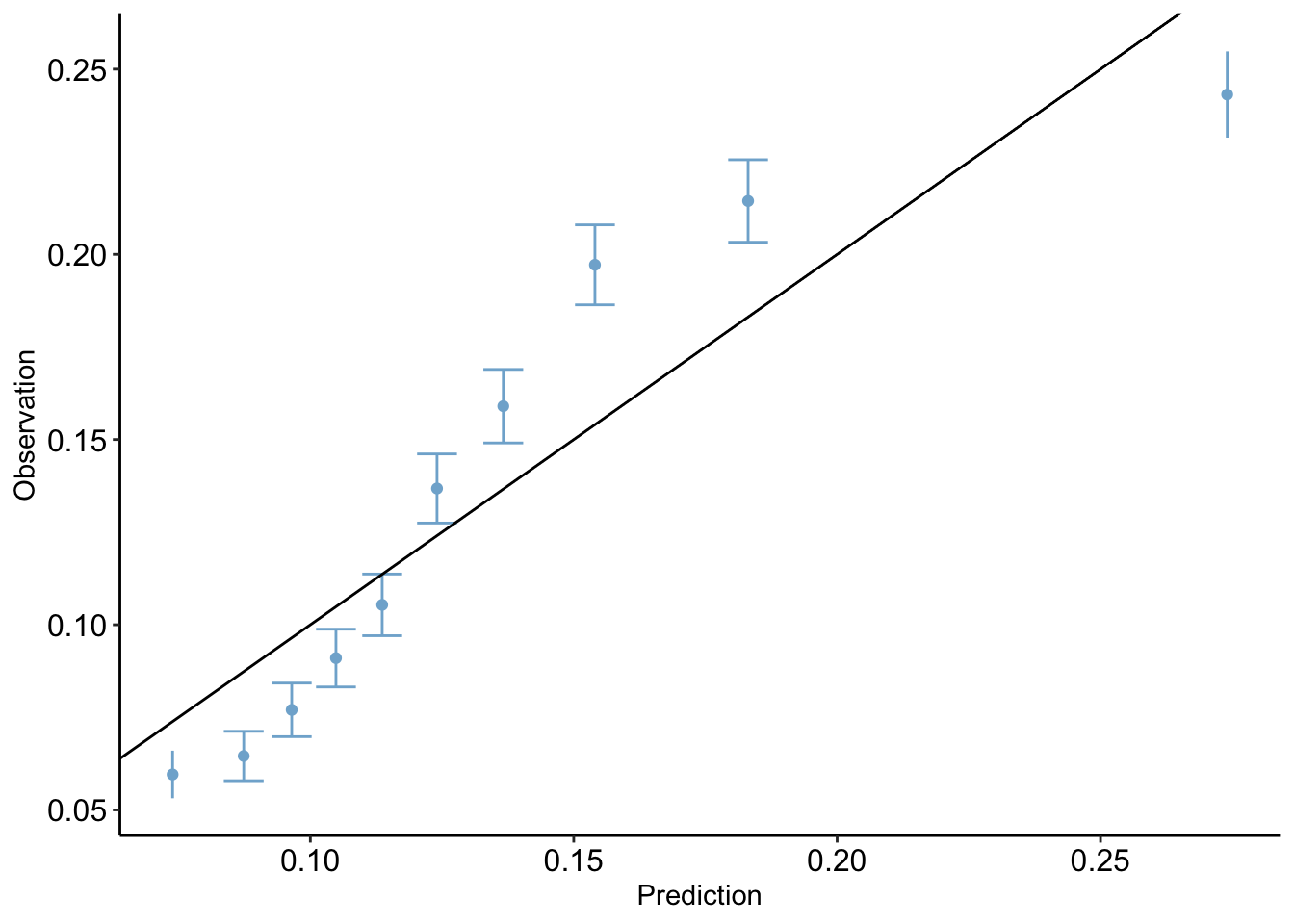

4.3 Calibration Plot

The calibration plot compares predicted probabilities to observed probabilities. Essentially, it will compare the data points for which the model predicted probabilities in a certain range and the true observed proportion of these data points which were predicted positives. Ideally, a model will fit close to the line x=y: this would imply that the model’s predictions align with the actual outcome. For example, if our model assigns 100 data points a predicted probability value between 0 and 0.1, and 10 of those points is a positive, then our model fits the data quite well.

clean_nhanes$pred <- pred_probs

calibration_plot(data = clean_nhanes, obs = "cc_diabetes_num", pred = "pred")$calibration_plot

clean_nhanes$pred_simple <- pred_probs_simple

calibration_plot(data = clean_nhanes, obs = "cc_diabetes_num", pred = "pred_simple")$calibration_plot

Brier Test

The Brier Score is a value which measures the accuracy of the model to the data. In this case, it is equal to mean-squared error.

mean((clean_nhanes$cc_diabetes_num - pred_probs)^2)[1] 0.09791126mean((clean_nhanes$cc_diabetes_num - pred_probs_simple)^2)[1] 0.11374314.4 Hosmer-Lemeshow Test

The Homser-Lemeshow test divides the data into 10 different subgroups of equal size, each with increasing risk for positive prediction, or diabetes in our case. The observed number of those with diabetes in the each group is compared with the expected number based on the model’s prediction.

hltest(full_diab_model_sys)

The Hosmer-Lemeshow goodness-of-fit test

Group Size Observed Expected

1 5220 29 47.93194

2 5220 44 96.09769

3 5220 99 156.96748

4 5220 183 236.32193

5 5220 347 341.67554

6 5220 529 485.18466

7 5220 848 696.01150

8 5220 1112 1009.00717

9 5220 1554 1507.32695

10 5217 2291 2459.47515

Statistic = 150.6378

degrees of freedom = 8

p-value = < 2.22e-16 hltest(simple_diab_model_sys)

The Hosmer-Lemeshow goodness-of-fit test

Group Size Observed Expected

1 5310 320 392.900614

2 5205 336 455.497648

3 4905 382 472.429670

4 5211 470 544.366735

5 5159 547 583.643016

6 5283 707 652.225758

7 5229 818 710.886418

8 5410 1057 830.456544

9 5260 1129 962.117074

10 5220 1269 1427.789578

11 5 1 3.686982

Statistic = 246.1812

degrees of freedom = 9

p-value = < 2.22e-16 In our model, we can see that the expected values are somewhat higher than the observed values in the lower proportion groups, however the fit seems to generally be accurate.

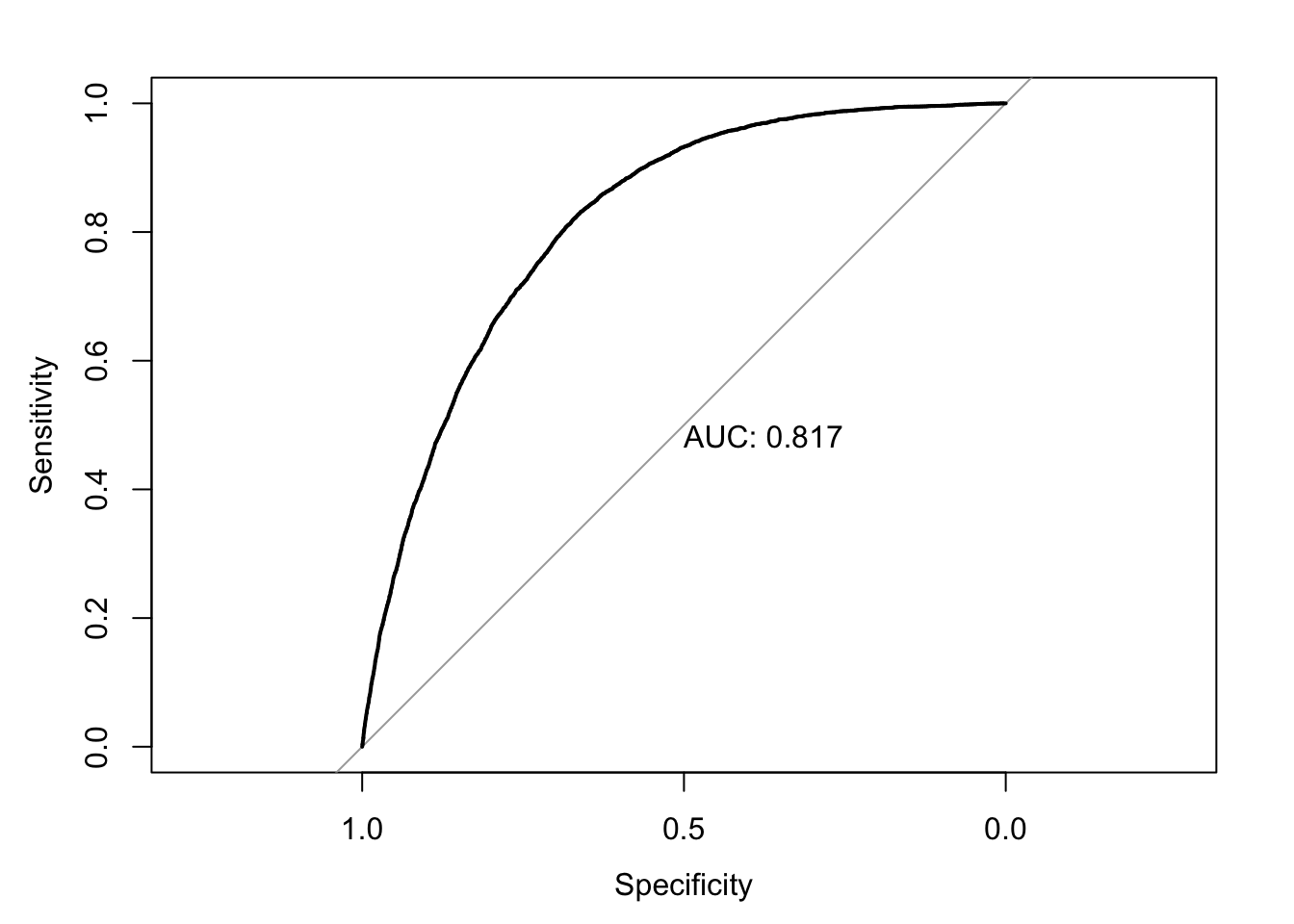

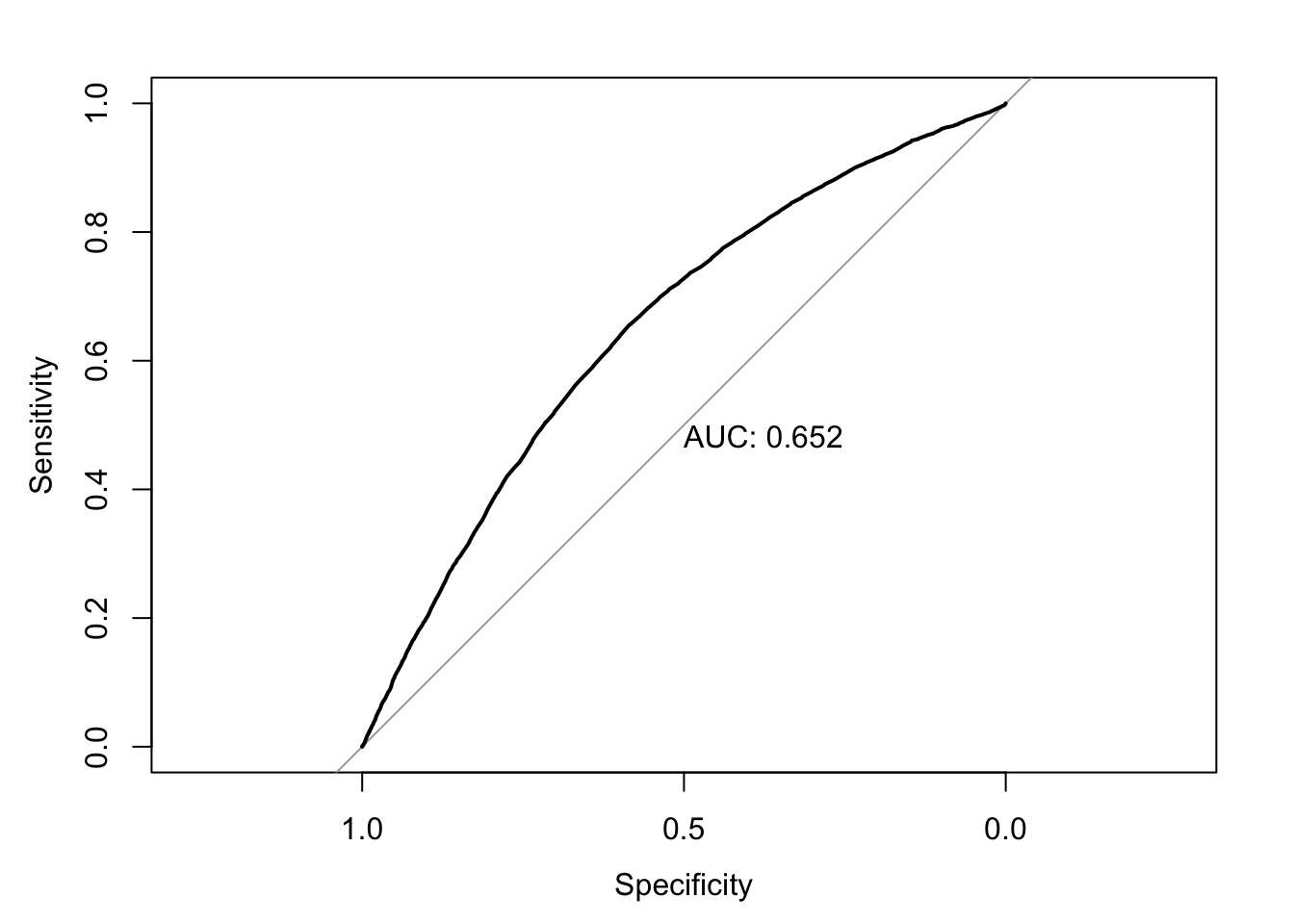

4.5 ROC Curve

The Receiver Operating Curve shows the performance of a model by plotting sensitivity vs specificity at different threshold values.

Sensitivity (AKA True Positive Rate) measures the proportion of true positives (samples correctly predicted as diabetes positive) to all the observed positives in the data. This can be written as \[\text{Sensitivity} = \frac{\text{True Positives}}{\text{True Positives + False Negatives}}\]

Specificity (AKA True Negative Rate) measures the proportion of true negatives (samples correctly predicted as diabetes negative) to all the observed negatives in the data. This can be written as \[\text{Specificity} = \frac{\text{True Negativites}}{\text{True Negativites + False Positives}}\]

As a model’s sensitivity increases, specificity will tend to decrease.

At different threshold values (for example, we typically use 0.5 for prediction, but could use any value from 0 to 1), we can calculate both sensitivity and specificity and plot these values on a curve.

The baseline curve used for comparison is just the line y=x. This is essentially modeling random prediction. The closer the curve is to the left and top edge, the better its performance, since it shows a high sensitivity without a huge drop off in specificity.

The AUC, or area under the curve, provides a single number to quantify this curve. The higher the performance of a model, the closer the ROC to the top left and the bigger the area under the curve. The ideal AUC, with both measurements always at 1 due to perfect predictions, would be 1. In practice, AUCs are typically in the range of 80-90.

roc_mod <- roc(predictor=pred_probs, response=clean_nhanes$cc_diabetes_num)Setting levels: control = 0, case = 1Setting direction: controls < casesplot(roc_mod, print.auc = TRUE)

roc_mod <- roc(predictor=pred_probs_simple, response=clean_nhanes$cc_diabetes_num)Setting levels: control = 0, case = 1

Setting direction: controls < casesplot(roc_mod, print.auc = TRUE)

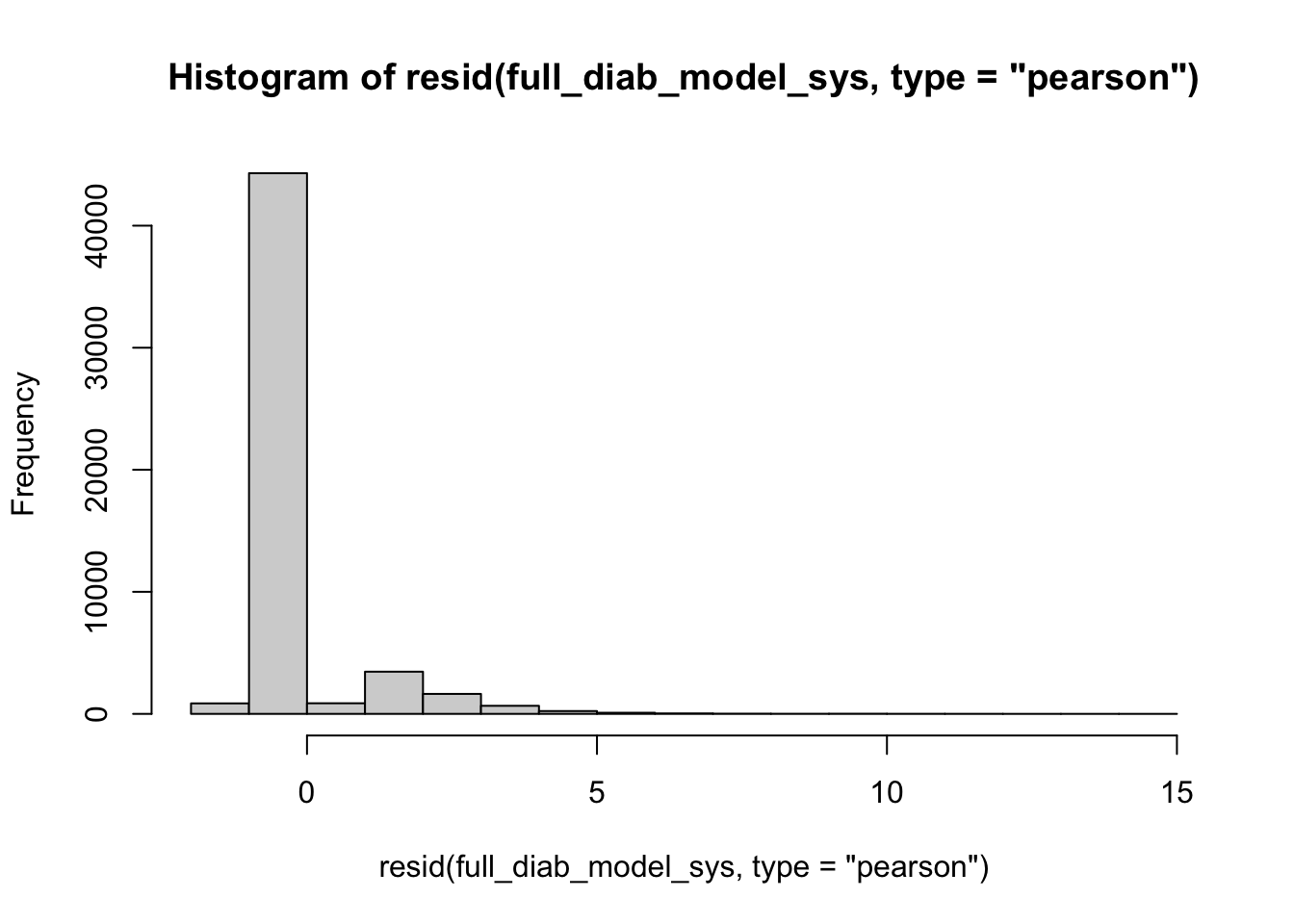

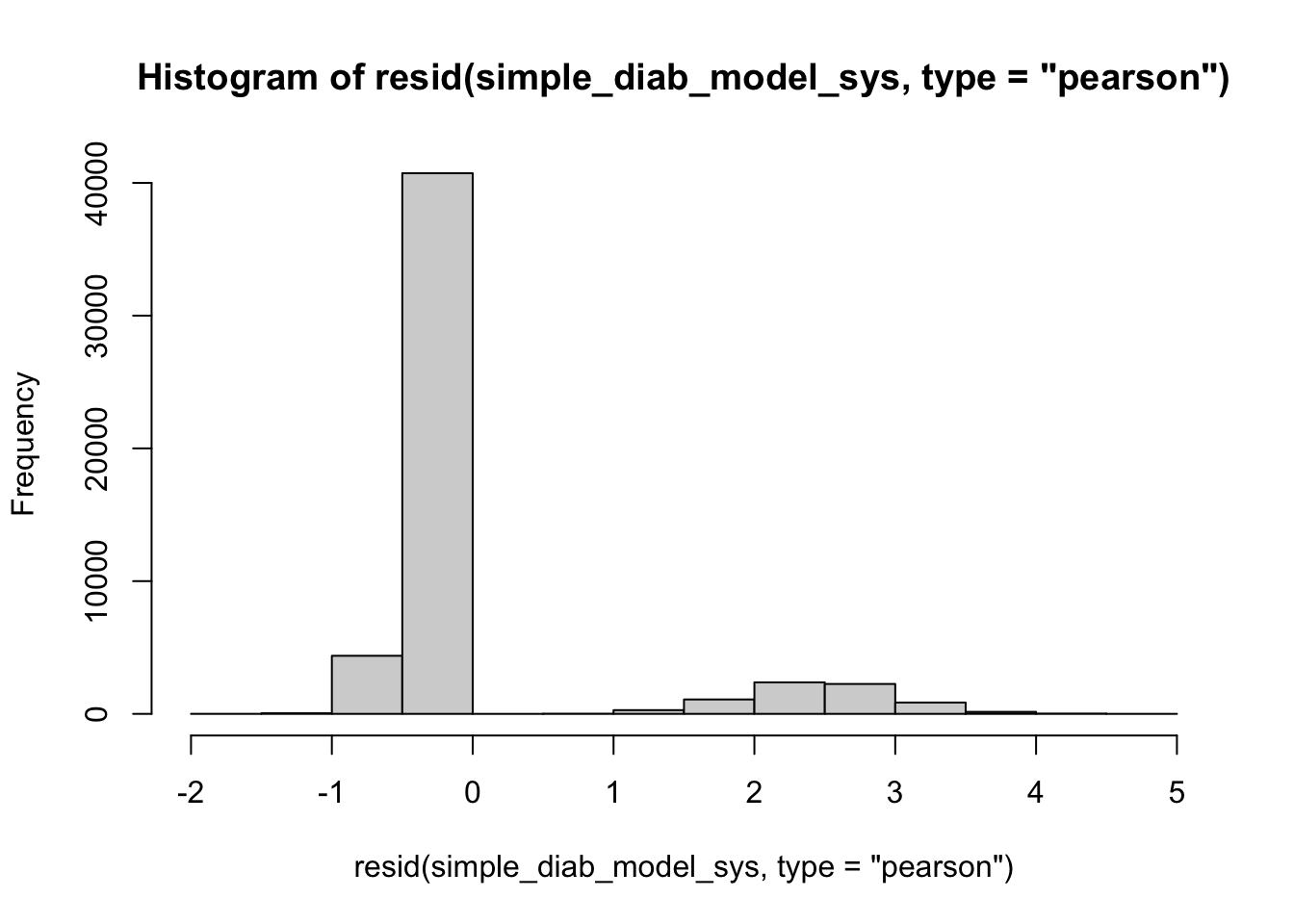

4.6 Residuals Histogram

A histogram of residuals should resemble a standard normal distribution, ideally. The histogram will plot the frequency of each pearson residual value. In an ideal model, the error between the predictions and observations (residuals) will be a result of random noise, creating a Gaussian shape. Skewness and irregularities may be a sign that the model is not fit properly.

hist(resid(full_diab_model_sys, type="pearson"), breaks = 20) #specify shape

lev <- hatvalues(full_diab_model_sys)

top_10 <- sort(lev, decreasing=TRUE)[1:10]

clean_nhanes[as.numeric(names(top_10))] %>% select(all_of(used_vars)) %>% kable()| cc_diabetes | bp_sys_mean | demo_age_years | demo_race | demo_gender | cc_bmi | cc_smoke | bp_med_use |

|---|---|---|---|---|---|---|---|

| No | 217.3333 | 51 | Other | Women | 35+ | Current | Yes |

| No | 266.0000 | 64 | Non-Hispanic Black | Women | 25 to <30 | Never | Yes |

| No | 125.3333 | 79 | Other | Men | 30 to <35 | Current | No |

| No | 102.0000 | 73 | Other | Women | 30 to <35 | Current | Yes |

| Yes | 201.3333 | 80 | Other | Men | 25 to <30 | Former | No |

| No | 270.0000 | 80 | Non-Hispanic White | Women | <25 | Current | Yes |

| No | 128.0000 | 78 | Other | Men | 30 to <35 | Current | No |

| Yes | 188.0000 | 61 | Other | Women | 30 to <35 | Former | Yes |

| No | 198.0000 | 69 | Other | Men | <25 | Current | Yes |

| Yes | 173.8333 | 60 | Other | Women | 30 to <35 | Current | Yes |

hist(resid(simple_diab_model_sys, type="pearson"), breaks = 20) #specify shape

lev <- hatvalues(full_diab_model_sys)

top_10 <- sort(lev, decreasing=TRUE)[1:10]

clean_nhanes[as.numeric(names(top_10))] %>% select(all_of(used_vars)) %>% kable()| cc_diabetes | bp_sys_mean | demo_age_years | demo_race | demo_gender | cc_bmi | cc_smoke | bp_med_use |

|---|---|---|---|---|---|---|---|

| No | 217.3333 | 51 | Other | Women | 35+ | Current | Yes |

| No | 266.0000 | 64 | Non-Hispanic Black | Women | 25 to <30 | Never | Yes |

| No | 125.3333 | 79 | Other | Men | 30 to <35 | Current | No |

| No | 102.0000 | 73 | Other | Women | 30 to <35 | Current | Yes |

| Yes | 201.3333 | 80 | Other | Men | 25 to <30 | Former | No |

| No | 270.0000 | 80 | Non-Hispanic White | Women | <25 | Current | Yes |

| No | 128.0000 | 78 | Other | Men | 30 to <35 | Current | No |

| Yes | 188.0000 | 61 | Other | Women | 30 to <35 | Former | Yes |

| No | 198.0000 | 69 | Other | Men | <25 | Current | Yes |

| Yes | 173.8333 | 60 | Other | Women | 30 to <35 | Current | Yes |

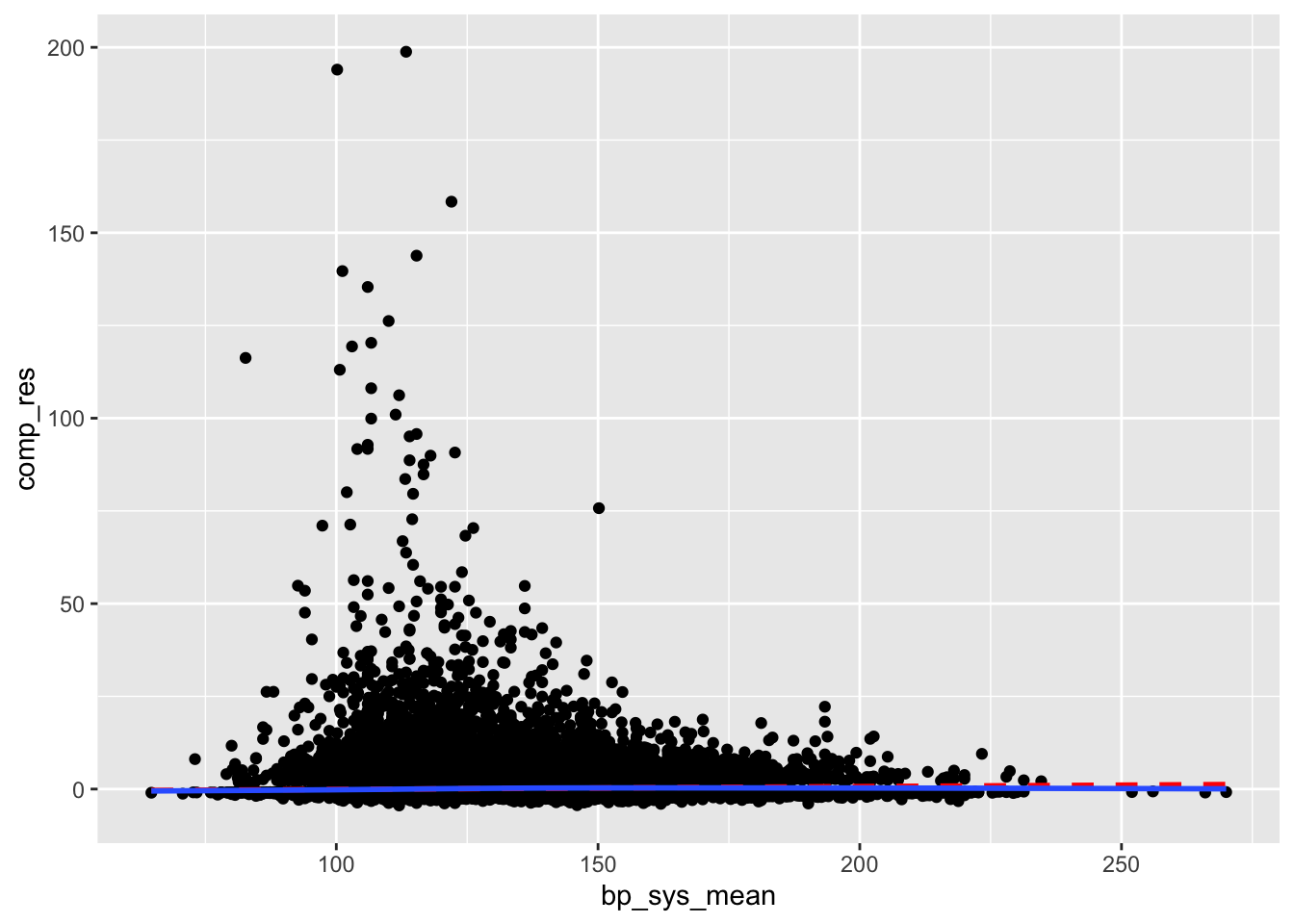

4.7 Ignore///

I followed a guide online but I don’t really know what this is doing:

clean_nhanes %>%

mutate(comp_res = coef(full_diab_model_sys)["demo_age_years"]*demo_age_years + residuals(full_diab_model_sys, type = "working")) %>%

ggplot(aes(x = demo_age_years, y = comp_res)) +

geom_point() +

geom_smooth(color = "red", method = "lm", linetype = 2, se = F) +

geom_smooth(se = F)`geom_smooth()` using formula = 'y ~ x'

`geom_smooth()` using method = 'gam' and formula = 'y ~ s(x, bs = "cs")'

clean_nhanes %>%

mutate(comp_res = coef(full_diab_model_sys)["bp_sys_mean"]*bp_sys_mean + residuals(full_diab_model_sys, type = "working")) %>%

ggplot(aes(x = bp_sys_mean, y = comp_res)) +

geom_point() +

geom_smooth(color = "red", method = "lm", linetype = 2, se = F) +

geom_smooth(se = F)`geom_smooth()` using formula = 'y ~ x'

`geom_smooth()` using method = 'gam' and formula = 'y ~ s(x, bs = "cs")'

Test for Multicollinearity

vif(full_diab_model_sys) GVIF Df GVIF^(1/(2*Df))

bp_sys_mean 1.169212 1 1.081301

demo_age_years 1.528167 1 1.236191

demo_race 1.207663 4 1.023866

demo_gender 1.091056 1 1.044536

cc_bmi 1.182024 3 1.028263

cc_smoke 1.173951 2 1.040908

bp_med_use 1.235766 1 1.111650Outliers

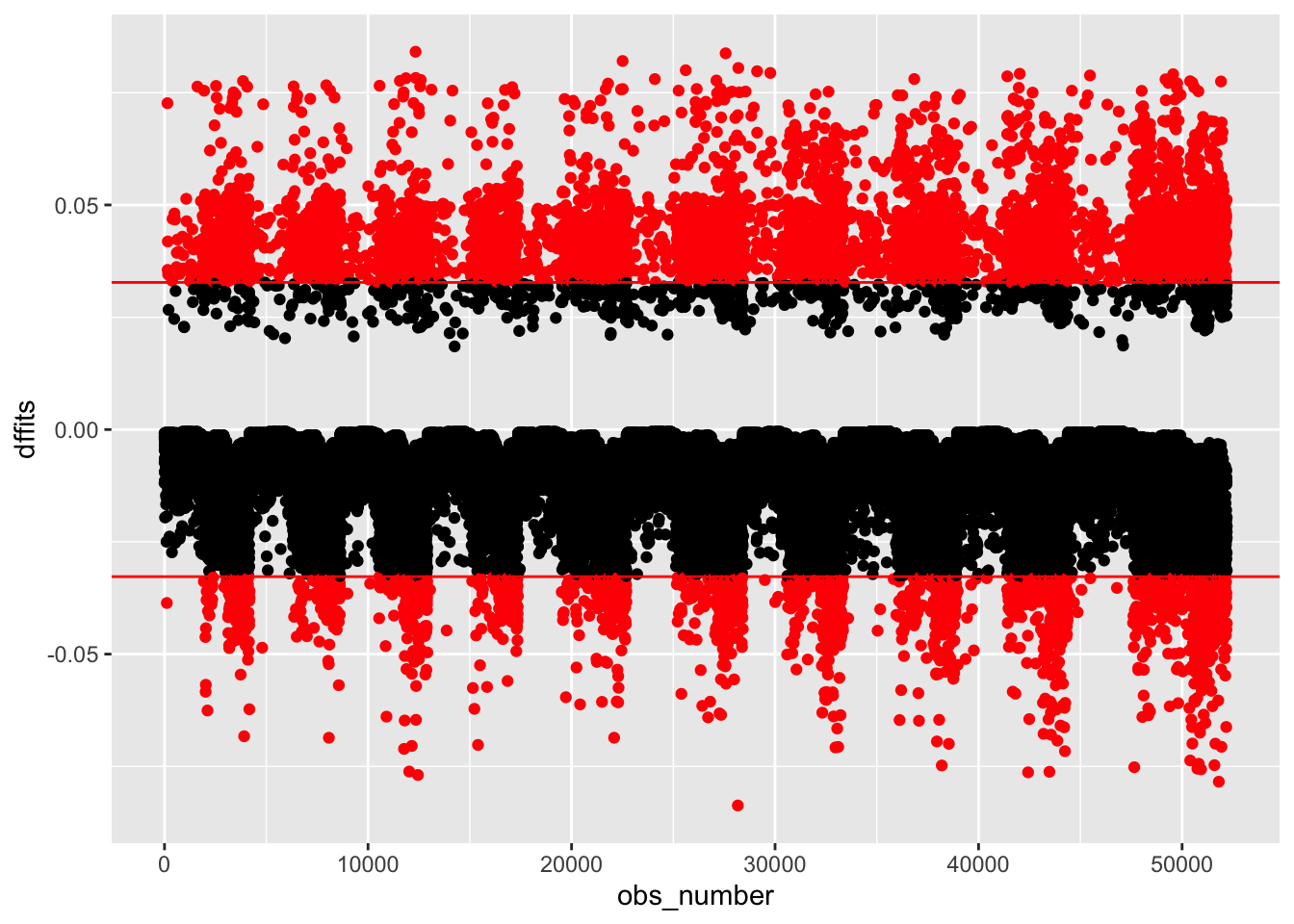

outlier_nhanes <-

clean_nhanes %>%

mutate(dffits = dffits(full_diab_model_sys))

outlier_nhanes %>%

mutate(obs_number = row_number(),

large = ifelse(abs(dffits) > 2*sqrt(length(coef(full_diab_model_sys))/nobs(full_diab_model_sys)),

"red", "black")) %>%

ggplot(aes(obs_number, dffits, color = large)) +

geom_point() +

geom_hline(yintercept = c(-1,1) * 2*sqrt(length(coef(full_diab_model_sys))/nobs(full_diab_model_sys)), color = "red") +

scale_color_identity()